Google Has Done It Again with BERT

Let's get some context. A few days ago, our friend Pandu Nayak, vice-president of Google Search, dropped a bombshell: he claimed that we are witnessing the biggest update to the search engine in years.

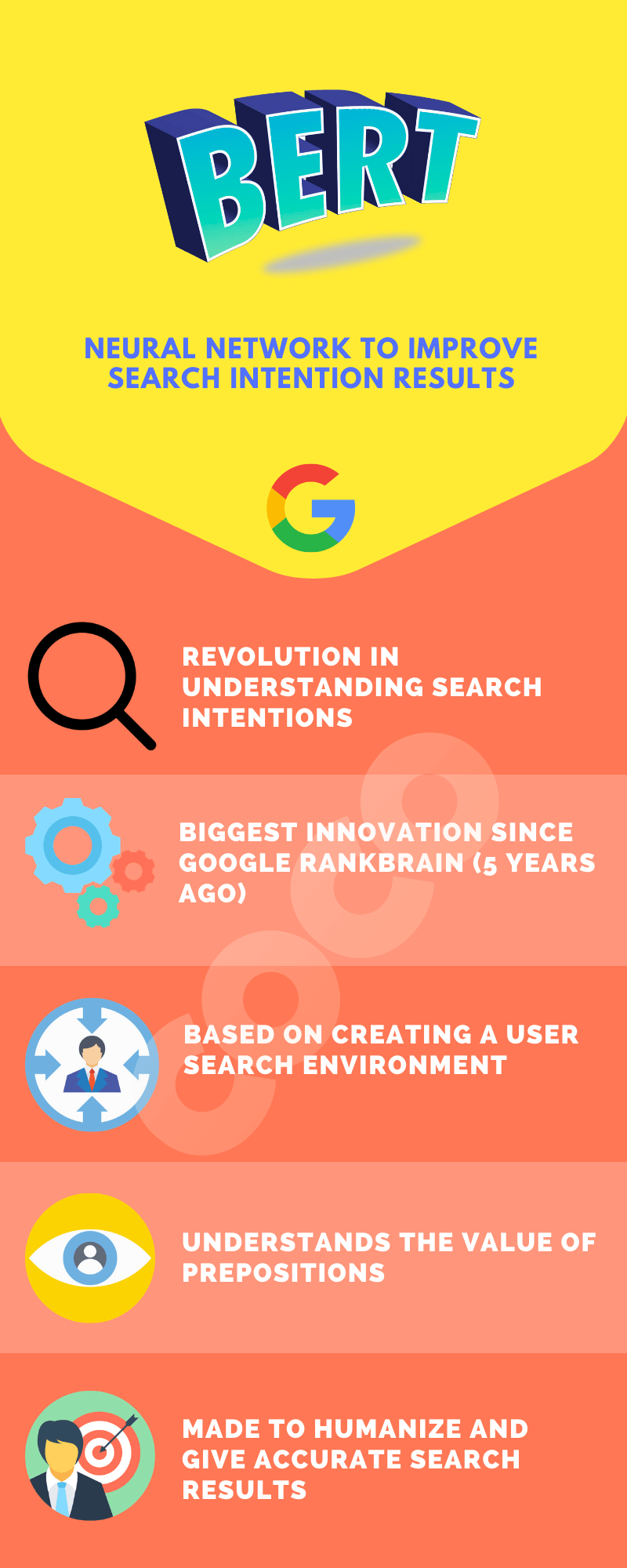

Yes, we are talking about BERT, an open-source neural network with the ability to process natural language in a way previously unknown in the world of artificial intelligence.

Google BERT, whose name stands for Bidirectional Encoder Representations from Transformers, is one of the biggest advances in research and innovation in the field of search intent.

It probably sounds like Greek to you, but I can assure you that this is going to change the paradigm of how Google understands searches. Has it become more human? First, let's see how it works, and then let's try to draw conclusions together.

BERT is the biggest change since Google introduced RankBrain five years ago, and by Google's own estimate it affects about one in ten searches.

As always, we do our calculations, and we may or may not be wrong, but we want to give you our vision of how Google BERT will affect the SERP.

How Does BERT Work?

Previously, Google interpreted a query as a succession of words, respecting their order and returning different results depending on it. BERT is now able to process search intent as a relationship between words.

This means that it associates the way words appear together with a specific search intent.
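To make the "relationship between words" idea a little more tangible, here is a minimal sketch in Python. It is not how BERT works internally, only a toy illustration of the "bidirectional" part of its name: every word is looked at together with the words on both sides of it. The function name `bidirectional_context` and the sample query are our own assumptions for the example.

```python
def bidirectional_context(query):
    """Pair every word with the words to its left and to its right.

    Toy illustration only: a real model such as BERT learns weighted
    relationships between words, it does not just list neighbours.
    """
    tokens = query.lower().split()
    return [
        (tokens[:i], token, tokens[i + 1:])
        for i, token in enumerate(tokens)
    ]


# Hypothetical query, chosen only for the example.
for left, word, right in bidirectional_context("cheap flights to madrid"):
    print(f"{word!r}: left={left} right={right}")
```

The point of the sketch is simply that a word like "to" is never read alone: what comes before it and what comes after it both count.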

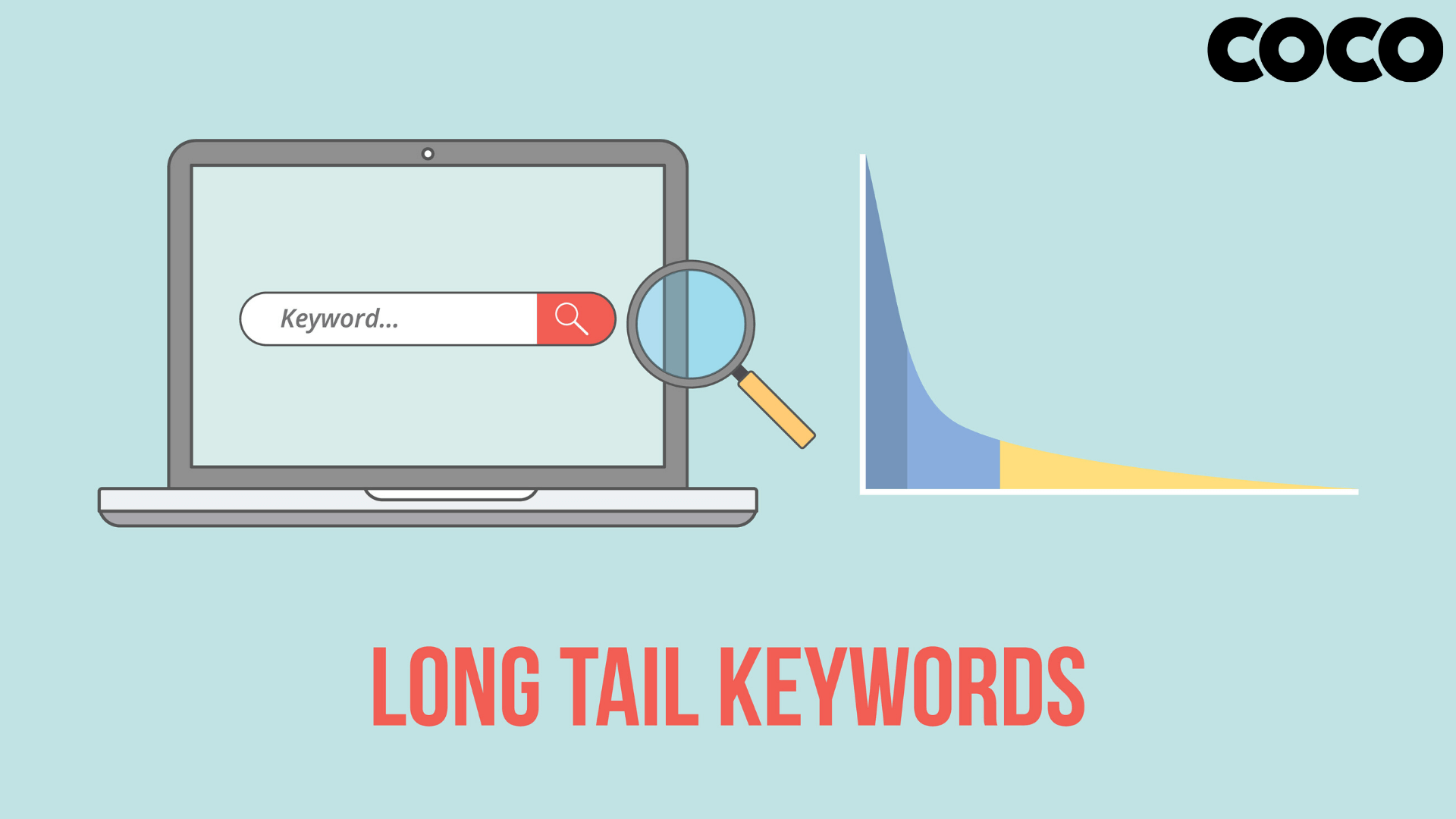

Do you know what this means? This is an ideal tool for long-tail keywords, long queries or exact searches, as it can find precisely what we are looking for.

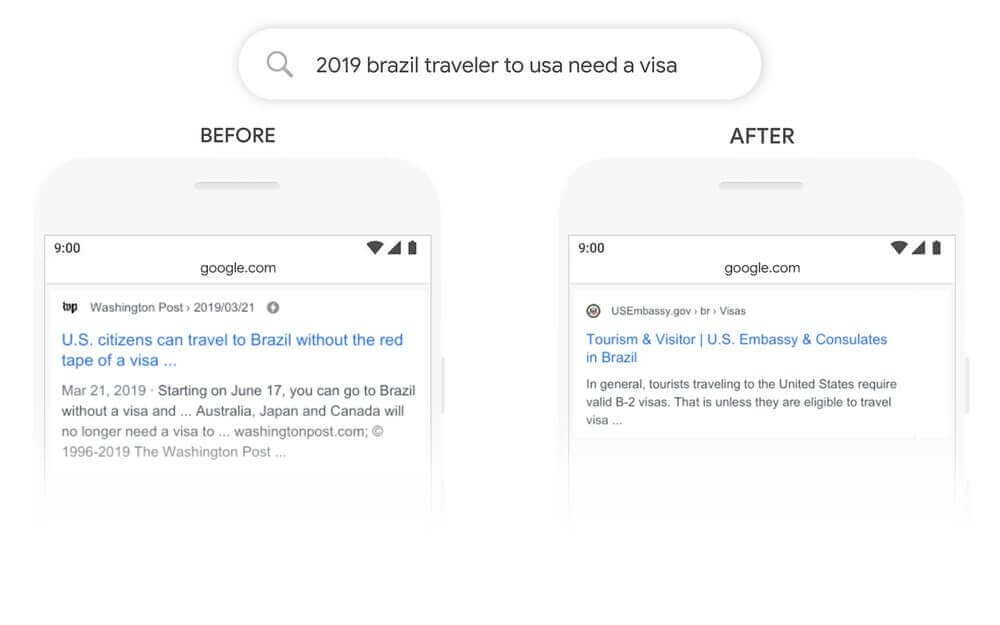

Google BERT is able to identify the value of prepositions in searches, distinguishing between queries that use the same nouns but different prepositions.

And when we talk about prepositions, we mean words such as ‘for’ or ‘by’, which are used very often when searching for information.

The clearest example is given by Google itself in its statement on BERT:

“Here’s a search for 2019 brazil traveler to usa need a visa. The word ‘to’ and its relationship to the other words in the query are particularly important to understanding the meaning. It’s about a Brazilian traveling to the U.S., and not the other way around. Previously, our algorithms wouldn't understand the importance of this connection, and we returned results about U.S. citizens traveling to Brazil. With BERT, Search is able to grasp this nuance and know that the very common word ‘to’ actually matters a lot here, and we can provide a much more relevant result for this query”.
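A small sketch in Python helps to see why the word ‘to’ matters so much. It compares a shortened version of Google's query with a reversed query that we made up for the comparison. If we only count words, both queries look identical; as soon as we look at what sits on each side of ‘to’, they are different. The helper names `bag_of_words` and `split_on_preposition` are our own, and this is a toy illustration, not Google's algorithm.

```python
from collections import Counter


def bag_of_words(query):
    """Count the words in a query, ignoring their order."""
    return Counter(query.lower().split())


def split_on_preposition(query, preposition="to"):
    """Return the words before and after the first occurrence of the preposition."""
    tokens = query.lower().split()
    i = tokens.index(preposition)
    return tokens[:i], tokens[i + 1:]


google_query = "brazil traveler to usa"    # shortened from Google's example
reversed_query = "usa traveler to brazil"  # hypothetical opposite intent

# Same words, so a pure word count cannot tell the two queries apart.
print(bag_of_words(google_query) == bag_of_words(reversed_query))

# The words around 'to' reveal who is travelling where.
print(split_on_preposition(google_query))
print(split_on_preposition(reversed_query))
```

The first comparison treats both searches as the same thing, which is roughly the mistake Google describes in its old results. The second one keeps the direction of the trip.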

Google has done it again, and this is only the beginning: despite years of training the neural network to respond to searches in this way, it is important to keep in mind that about 15% of daily searches are new. We are in a universe in constant expansion.

Who Is Affected by BERT?

The first question I asked myself when I heard about BERT was whether it is yet another sign of Google's intention to anticipate what we want to search for.

Google knows that we have gotten used to its predictions and that we often rely on them to run a search that brings us closer to what we want. Google wants us to search without fear and without hesitating.

What does this mean? Well, we must continue to take care of the content, we must continue to verify the information and, above all, we must put ourselves in the user's shoes when it comes to structuring information.

We imagine webmasters doing empathy exercises to understand the search intent of the potential users who might visit their websites. We see an ecosystem of headers based on common questions, and we see a huge optimization of FAQs.

It affects SEOs and copywriters, and therefore companies that have put themselves in the hands of professionals. Obviously, if they have done a good job they have nothing to worry about.

Forewarned is forearmed. And Google had already given warning with its Medic Update and Florida 2.0. Now comes an era of content and information, and Google wants to ensure that we find everything we might look for.

Where Is BERT Working?

Google BERT is currently only operating in the United States, but there is speculation that it will roll out to other countries in the coming months, after this first contact with the US market.

When will it arrive in Europe? We don't know, but at Coco Solution we've been preparing for this moment: the SEO team, working alongside the editors, has created the kind of valuable content that we believe will not be hurt by BERT but, on the contrary, will benefit from it.

The Future of Keywords… in Danger?

We've gone through social networks to read all sorts of opinions about Google BERT, and they all agree on one question: are keywords on their way out?

Before you get nervous, stop, breathe and read on calmly. The keyword will not disappear, but it will evolve and change, at least as long as people keep typing this kind of search: keywords without prepositions, arranged in that orderly chaos we have become accustomed to and that characterizes SEO.

What is going to be affected is thin content and quick content. Google will take care of answering short questions directly on the SERP, and we are going to have to work incredibly hard on our content to appear both in the first results and in the rich snippets.

Google's war on fake news has moved to another level with BERT: the battle now shifts to search intent, where Google will try to re-educate us and humanize the way we search.

In short, this is what we know so far. We do not know much more, but we assure you that we will keep updating this article on our blog as we come to understand this precious, useful and innovative neural network called Google BERT even better.